Software Security: The Big Picture. Part 3

A Proposal

We’re done with the complaining. Now I’m going to spend a couple of minutes talking about what you might be able to do about this, and what I think is going to happen to our industry over the next couple of years. Instead of looking at this as a sum of components, where we buy a solution to protect every one of these items, we’re going to branch out and start thinking about this as protecting a system; then all the things that protect these individual points become features, not products.

If you look at that from an assessment point of view, what I think it’s going to add up to is the ability to track changes across all the devices in a system like this one, find the vulnerabilities, and map those vulnerabilities together to give a single picture of what’s going on in that data center.
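To make "a single picture" concrete, here is a minimal Java sketch (Java 16+ for records). It assumes a hypothetical `Finding` record and a hypothetical asset inventory that maps each device ID to the system it belongs to; neither comes from the talk. It simply rolls device-level findings up into one list per system.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical: one finding reported by a scanner against a single device.
record Finding(String deviceId, String description) {}

final class SystemView {
    private SystemView() {}

    // Devices missing from the inventory are kept under this key rather than
    // silently dropped, since an unmapped device is itself worth reporting.
    static final String UNMAPPED = "UNMAPPED";

    /**
     * Rolls device-level findings up to the system each device belongs to,
     * so the report can say "this system has a vulnerability" instead of
     * "this server has a vulnerability".
     *
     * @param findings       raw per-device findings
     * @param deviceToSystem hypothetical asset inventory: device ID to system name
     * @return findings grouped by system name, sorted by name
     */
    static Map<String, List<Finding>> groupBySystem(
            List<Finding> findings, Map<String, String> deviceToSystem) {
        Map<String, List<Finding>> bySystem = new TreeMap<>();
        for (Finding f : findings) {
            String system = deviceToSystem.getOrDefault(f.deviceId(), UNMAPPED);
            bySystem.computeIfAbsent(system, k -> new ArrayList<>()).add(f);
        }
        return bySystem;
    }
}
```

For example, findings on `db-01` and `app-07` with an inventory that assigns both to a hypothetical `payments` system come back as one `payments` entry holding both findings, while a finding on a device the inventory doesn't know lands under `UNMAPPED`. That bucket is a rough measure of how far the asset inventory is from "IT asset management that works".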

Like I said at the beginning of the talk, I’m Fortify’s Chief Scientist, so if all of this were in the bag it wouldn’t be very interesting to me. So let me tell you about a couple of things that I think need to change in order for us to build technology like this. First of all, we need a normalized data center, because we need very, very good coverage of what’s going on in that data center. And that means we need automation to know where to go and what to do.

Second, we need to tie into the IT asset management system. IT asset management systems have been around forever, and they’ve never worked particularly well. But when I look at those first two points (a normalized data center, and IT asset management that works), boy… I see cloud. I don’t know what those cloud guys are off doing, but I know what they’re promising, and what they’re promising adds up to those first two points.

Next, we’ve got to be able to look at the code running in that data center and automatically figure out where it’s weak. Because of my background, I think of that as automated static analysis. Maybe you’ve got a better way to do that in real time without disrupting the operation of the system; that would work, too.
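As a very rough stand-in for automated static analysis, the Java sketch below flags source lines that call a method on a watch list. It is plain text matching, far short of the data-flow analysis a real static analyzer performs, and the `NaiveCallScanner` and `CallSite` names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical result type: where a watched method appears to be called.
record CallSite(String file, int line, String method) {}

final class NaiveCallScanner {
    private NaiveCallScanner() {}

    /**
     * Flags each source line that appears to call one of the watched
     * methods (for example "Double.parseDouble"). This is text matching
     * only: it ignores imports, aliases and data flow, and it can also
     * match text inside comments or string literals.
     */
    static List<CallSite> scan(String file, String source, List<String> watched) {
        // Precompile one pattern per method: the literal name, optional
        // whitespace, then an opening parenthesis.
        List<Pattern> patterns = new ArrayList<>();
        for (String method : watched) {
            patterns.add(Pattern.compile(Pattern.quote(method) + "\\s*\\("));
        }
        List<CallSite> hits = new ArrayList<>();
        String[] lines = source.split("\n", -1);
        for (int i = 0; i < lines.length; i++) {
            for (int m = 0; m < patterns.size(); m++) {
                if (patterns.get(m).matcher(lines[i]).find()) {
                    // Report 1-based line numbers, as editors do.
                    hits.add(new CallSite(file, i + 1, watched.get(m)));
                }
            }
        }
        return hits;
    }
}
```

The watch list is what makes this useful in a hurry. When a new weakness is announced in a single library method, the question "which of our code calls it?" becomes one more entry in the list.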

Next, we’ve got to have a process-resident software monitor. We need to be able to tie that custom code into what’s going on in the rest of the data center, and we’ve got to be able to do it automatically.

Lastly, the fancy term “cross-domain vulnerability reasoning”: that means we’ve got to be able to take the database and the network and the application, put the results together, and prioritize what’s going on with this end-to-end system. The real challenge there, though, I think, is going to be standards: do we have ways for those different reporting techniques to come together and speak on common ground?
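One way to picture the “common ground” problem is that each domain's tool reports in its own format, so every result has to be translated into one shared record before anything can be ranked. The Java sketch below assumes that translation has already happened. The `NormalizedFinding` record, the `Domain` enum and the 0 to 10 severity scale are all hypothetical. The ranking rule is also only an illustrative heuristic: systems with findings in more domains rank first, since that hints at an end-to-end attack path, then systems are ordered by worst severity.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.EnumSet;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

enum Domain { NETWORK, DATABASE, APPLICATION }

// Hypothetical shared record that network, database and application
// tools would all have to agree on.
record NormalizedFinding(String system, Domain domain, String summary, double severity) {}

// Per-system roll-up used for ranking.
record SystemRisk(String system, Set<Domain> domains, double maxSeverity) {}

final class CrossDomainRanker {
    private CrossDomainRanker() {}

    static List<SystemRisk> rank(List<NormalizedFinding> findings) {
        Map<String, Set<Domain>> domains = new HashMap<>();
        Map<String, Double> worst = new HashMap<>();
        for (NormalizedFinding f : findings) {
            // Record which domains have findings for this system...
            domains.computeIfAbsent(f.system(), k -> EnumSet.noneOf(Domain.class))
                   .add(f.domain());
            // ...and keep the highest severity seen for it.
            worst.merge(f.system(), f.severity(), Double::max);
        }
        List<SystemRisk> risks = new ArrayList<>();
        for (Map.Entry<String, Set<Domain>> e : domains.entrySet()) {
            risks.add(new SystemRisk(e.getKey(), e.getValue(), worst.get(e.getKey())));
        }
        // Illustrative heuristic: more domains first, then worst severity,
        // then system name so ties come out in a stable order.
        risks.sort(Comparator
                .comparingInt((SystemRisk r) -> r.domains().size()).reversed()
                .thenComparing(Comparator.comparingDouble(SystemRisk::maxSeverity).reversed())
                .thenComparing(SystemRisk::system));
        return risks;
    }
}
```

Under this rule, a payment system with moderate network, application and database findings outranks an unrelated system with a single higher-severity CVE. Whether that is the right trade-off is a business decision, and that is exactly the kind of context the talk argues the tooling should carry.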

If you can do it, if you can pull it off, you can get results like this one (see image). Instead of saying “This server has a vulnerability”, we say “This system has a vulnerability”. Maybe this is the online payment processing system: we see a restricted-access database on the back end, and an attack vector through an application that connects to it, with vulnerabilities in the app code, an insecure configuration, and known CVEs in the app server itself. We can put that picture together and put it in the right spot on your list of vulnerabilities, rather than reporting all of those problems separately and making YOU assemble the big picture.

One Last Example

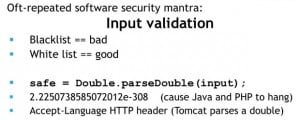

I’m wrapping up. Let me give you an example of how I see this system working. The vulnerability I’m going to talk about here is my favorite from 2011, certainly from February, and I think it’s a strong contender for the year (see right-hand image). The software security guys are always telling the developers: “Do input validation. Do input validation. Make sure that the person who sends you input isn’t trying to attack you.” And they say: “Blacklist: bad. You’re never going to be able to enumerate all of the bad data coming into the system. Instead, whitelist. Make sure that input looks good.”

Well, if you’d asked me two weeks ago whether this line of code looked like good input validation, I would have said: “Thumbs up! This is good stuff!” If you parse that as a double, you know it’s a number. Are all numbers good? Well, maybe; maybe not. But at least you know it’s not some shellcode, so this is the beginning of some really good input validation. I would have said this to any developer who asked me.
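The kind of check being described might look like the following Java sketch. It accepts input only if it parses as a finite double inside an expected range. The `parseAmount` name and the range bounds are hypothetical. One subtlety: `Double.parseDouble` also accepts strings such as `NaN`, `Infinity`, hexadecimal floating-point literals and a trailing `d` or `f` suffix, so "it parsed" is a looser whitelist than it first appears.

```java
import java.util.OptionalDouble;

final class AmountValidator {
    private AmountValidator() {}

    /**
     * Whitelist-style check: the input is accepted only if it parses as a
     * finite double within [min, max]. Note that the parse runs before any
     * range check, so a bug inside the parser itself is reachable by any
     * caller who can send this field.
     */
    static OptionalDouble parseAmount(String input, double min, double max) {
        if (input == null) {
            return OptionalDouble.empty();
        }
        double value;
        try {
            // parseDouble ignores leading and trailing whitespace itself.
            value = Double.parseDouble(input);
        } catch (NumberFormatException e) {
            return OptionalDouble.empty(); // not a number at all: reject
        }
        // parseDouble happily returns NaN and infinities; reject both.
        if (Double.isNaN(value) || Double.isInfinite(value)) {
            return OptionalDouble.empty();
        }
        if (value < min || value > max) {
            return OptionalDouble.empty();
        }
        return OptionalDouble.of(value);
    }
}
```

Returning `OptionalDouble` rather than throwing keeps the "reject" path explicit at every call site, which makes the validation easy to audit.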

Well, it turned out that in Java and PHP there’s a magic number, a number that sends the conversion routine into an infinite loop. An attacker can very quickly heat up your CPU just by sending the right small range of numbers to your computer. So all of a sudden, what I would have called good input validation is a really easy way to deny service to your users. That’s a big deal: overnight, what good code looks like has changed.

Now, everybody’s busy trying to release patches for this. What do you do in the meantime? And what do you tell those software developers? Oh, sorry, one more detail. Not only is this exciting from a Chief Scientist’s perspective, it’s real, too. Major containers were using that parse method to process common HTTP headers, and that makes it really easy to wedge common app servers like Tomcat.
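For the “meantime” question, one stopgap is to reject the trigger value before it ever reaches the parser. The Java sketch below assumes the trigger is the value reported for the Java bug (CVE-2010-4476), 2.2250738585072012e-308, which reportedly reached Tomcat through the quality values in an `Accept-Language` header. The `MagicNumberFilter` name is hypothetical. The check compares only the significant digits of the mantissa, so rewrites such as 0.00022250738585072012e-304 are also caught. This is itself a blacklist, with all the weaknesses the whitelist advice above warns about, and it does not replace the vendor patch.

```java
final class MagicNumberFilter {
    private MagicNumberFilter() {}

    // Leading significant digits of 2.2250738585072012e-308, the widely
    // reported trigger value for the Java parseDouble hang.
    private static final String SIGNATURE = "2225073858507201";

    /**
     * Stopgap check to run BEFORE calling Double.parseDouble on untrusted
     * input. It ignores the exponent, so it will also reject some harmless
     * values, and as a blacklist it may miss other trigger variants.
     */
    static boolean looksLikeMagicNumber(String input) {
        if (input == null) {
            return false;
        }
        String s = input.trim();
        // Cut off the exponent part, if any (either 'e' or 'E').
        int e = Math.max(s.indexOf('e'), s.indexOf('E'));
        String mantissa = e >= 0 ? s.substring(0, e) : s;
        // Keep only digits, then drop leading zeros: "0.000222..." becomes "222...".
        String digits = mantissa.replaceAll("[^0-9]", "").replaceFirst("^0+", "");
        return digits.startsWith(SIGNATURE);
    }
}
```

A caller would reject the request (or drop the header value) whenever `looksLikeMagicNumber` returns true, and only then hand the string to the parser.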

So, what are you going to do? Well, if you were thinking about the big picture you could very quickly say which app servers are vulnerable, because I know which ones are, for instance, running Java or PHP. Okay, now that I know which app servers are vulnerable, can I look inside the code running on those servers and say which ones make calls to this vulnerable method? Now that I know that, let me look at the business context and figure out – does a denial-of-service attack against the system matter? Maybe I don’t care about this one today, I’m going to go protect the ones where denial-of-service against the Java app really does matter. And then, I’m going to program that network to at least filter out the known attacks that we started to see in the last week or two. And I can do all of that fast.
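The three questions above can be sketched as successive filters over a joined inventory. In this Java sketch the `AppServer` record is hypothetical. It assumes the runtime comes from asset management, `callsParseDouble` from code analysis, and `availabilityCritical` from the business owner, and the lower-case runtime labels are illustrative.

```java
import java.util.List;
import java.util.Set;
import java.util.stream.Collectors;

// Hypothetical joined record for one app server.
record AppServer(String name, String runtime, boolean callsParseDouble,
                 boolean availabilityCritical) {}

final class DoSTriage {
    private DoSTriage() {}

    // Runtimes treated as affected in this example.
    private static final Set<String> AFFECTED_RUNTIMES = Set.of("java", "php");

    /** Returns, in input order, the names of the servers to protect first. */
    static List<String> protectFirst(List<AppServer> servers) {
        return servers.stream()
                // 1. Is the runtime affected at all?
                .filter(s -> AFFECTED_RUNTIMES.contains(s.runtime()))
                // 2. Does the code running on it call the vulnerable parse method?
                .filter(AppServer::callsParseDouble)
                // 3. Does denial of service against it matter to the business?
                .filter(AppServer::availabilityCritical)
                .map(AppServer::name)
                .collect(Collectors.toList());
    }
}
```

Servers dropped by the third filter are still affected. They simply go later in the patch queue, while network filtering of known attack strings covers the gap.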

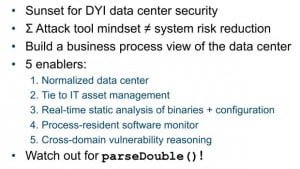

The second piece of feedback the RSA program may have for me: “Brian, you’ve got to put an Apply slide in at the end.” So here it is, the Apply slide (see image). This idea of do-it-yourself data center security is reaching its sunset. We’re still going to have an RSA show floor, and everybody is still going to tell you they’ve got a solution. But I think the idea that we expect the customer to stitch those solutions together has got to end. It comes from an attack tool mindset: the idea that every time somebody finds a new kind of vulnerability, we’re going to put another finger in the dike with another solution. Instead, we’re going to build a business process view of the data center, and maybe they don’t even know it, but those cloud guys are going to help us do it. They’ll help by normalizing the data center and telling us which assets in it belong to which business process.

Then we’re going to get more clever about the way we analyze those systems, so that we can pull out vulnerabilities without disrupting the business process. We’re going to start adding code to the software those developers write, and watching its execution, so that we can tie the inside of the application to the rest of the context of that data center. And we’re going to get good at putting everybody on the same playing field, so that we can give a true prioritization of vulnerability and incident data in the data center.

If that was too much for you, too far-reaching, thinking out into 2012 and beyond, then get back to the data center and watch out for your calls to parse a double, because if your CPUs are heating up, that’s probably why. Thank you!
